Interaction Design

The interactions are designed around the interaction features available on the HoloLens device, following the interaction design guidelines for people with ASD (Hussain, Abdullah, Husni, & Mkpojiogu, 2016).

The user experience flow starts with opening the application into which My Ho Me is integrated. First, the user places the main-menu button in their surroundings. Second, the user chooses a category from the main-menu and then selects a hologram from the sub-menu of the chosen category. To exit the interface, the user closes the main-menu button.
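The flow described above can be read as a small state machine. The following is a minimal sketch in Python, not the implementation used in My Ho Me. The step names, the action names, and the assumption that the user may choose another category or hologram before closing the menu are illustrative only.

```python
from enum import Enum, auto


class Step(Enum):
    """Hypothetical names for the steps of the user experience flow."""
    APP_OPENED = auto()
    MENU_PLACED = auto()
    CATEGORY_CHOSEN = auto()
    HOLOGRAM_SELECTED = auto()
    EXITED = auto()


# Allowed transitions, keyed by (current step, action name).
# Repeating "choose_category" or "select_hologram" is an assumption.
TRANSITIONS = {
    (Step.APP_OPENED, "place_main_menu"): Step.MENU_PLACED,
    (Step.MENU_PLACED, "choose_category"): Step.CATEGORY_CHOSEN,
    (Step.CATEGORY_CHOSEN, "choose_category"): Step.CATEGORY_CHOSEN,
    (Step.CATEGORY_CHOSEN, "select_hologram"): Step.HOLOGRAM_SELECTED,
    (Step.HOLOGRAM_SELECTED, "select_hologram"): Step.HOLOGRAM_SELECTED,
    (Step.HOLOGRAM_SELECTED, "choose_category"): Step.CATEGORY_CHOSEN,
    (Step.MENU_PLACED, "close_main_menu"): Step.EXITED,
    (Step.CATEGORY_CHOSEN, "close_main_menu"): Step.EXITED,
    (Step.HOLOGRAM_SELECTED, "close_main_menu"): Step.EXITED,
}


def advance(step: Step, action: str) -> Step:
    """Return the next step, or raise ValueError if the action is not allowed."""
    try:
        return TRANSITIONS[(step, action)]
    except KeyError:
        raise ValueError(f"action {action!r} is not allowed in step {step.name}")


def run(actions: list[str]) -> Step:
    """Apply a sequence of actions, starting from the opened application."""
    step = Step.APP_OPENED
    for action in actions:
        step = advance(step, action)
    return step
```

For example, `run(["place_main_menu", "choose_category", "select_hologram", "close_main_menu"])` walks through the whole flow, while selecting a hologram before a category is chosen raises an error.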

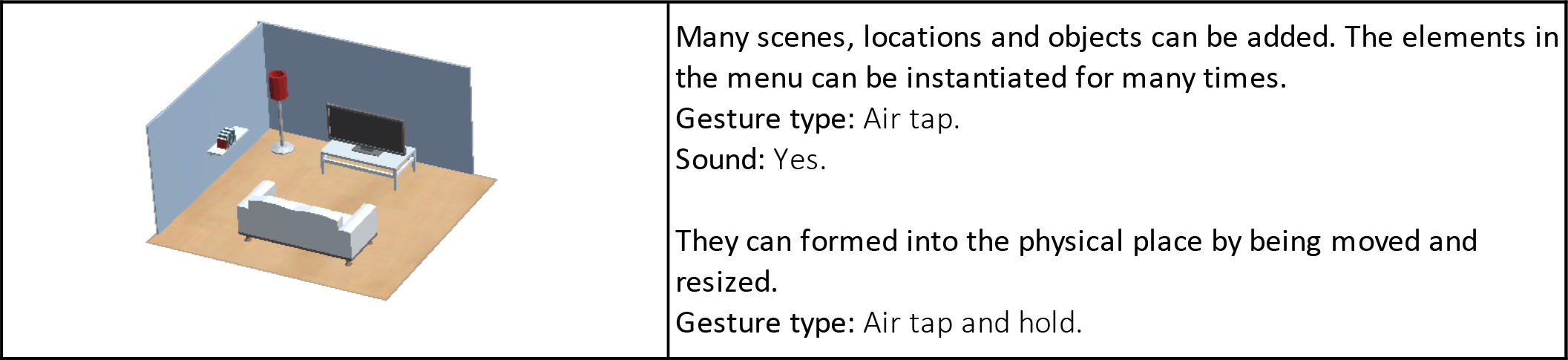

Users can choose the objects/holograms they want to add to the room from the sub-menus. When an object/hologram is placed in the 3D environment, its coordinates are measured and locked by HoloLens. These objects are therefore called “world-locked content”. That means that even when the user walks around the place, the objects/holograms remain in the spots where the user first put them.
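The idea of world-locked content can be illustrated with a short sketch. It is written in Python for illustration and does not use the HoloLens API. The `Vec3` and `Hologram` types and the function name are hypothetical, and the sketch considers only the position of the user, not head rotation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vec3:
    x: float
    y: float
    z: float

    def __sub__(self, other: "Vec3") -> "Vec3":
        return Vec3(self.x - other.x, self.y - other.y, self.z - other.z)


@dataclass(frozen=True)
class Hologram:
    name: str
    world_position: Vec3  # fixed in room coordinates once the hologram is placed


def position_relative_to_user(hologram: Hologram, user_position: Vec3) -> Vec3:
    """Offset from the user to the hologram; only this value changes as the user walks."""
    return hologram.world_position - user_position


# A hologram placed 1 m to the right of and 2 m in front of the room origin.
cube = Hologram("cube", Vec3(1.0, 0.0, 2.0))

before = position_relative_to_user(cube, Vec3(0.0, 0.0, 0.0))
after = position_relative_to_user(cube, Vec3(1.0, 0.0, 0.0))  # user walked 1 m to the right
```

The world position of the hologram is never modified. Only the offset seen from the user changes, which is why the hologram appears to stay in place as the user moves.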

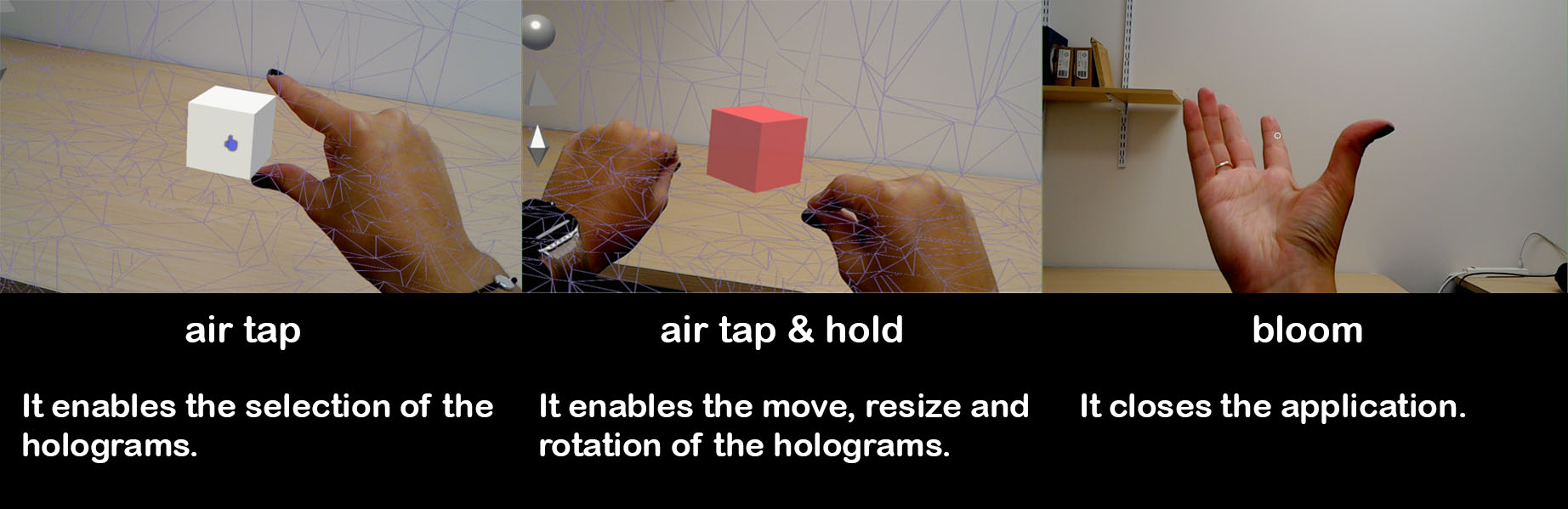

Hand gestures are one of the main reasons why the HoloLens device was selected for this study. Interacting with an interface via hand movements is assumed to be a natural form of interaction. Thus, there is no need to learn how to use the device, and users do not need to think hard about what to do with the buttons or objects they see in the interface. Voice input is also available on HoloLens. Nevertheless, since most autistic people may have problems with voice communication (Smith, Goddard, & Fluck, 2004), voice commands are not used in the interaction design.

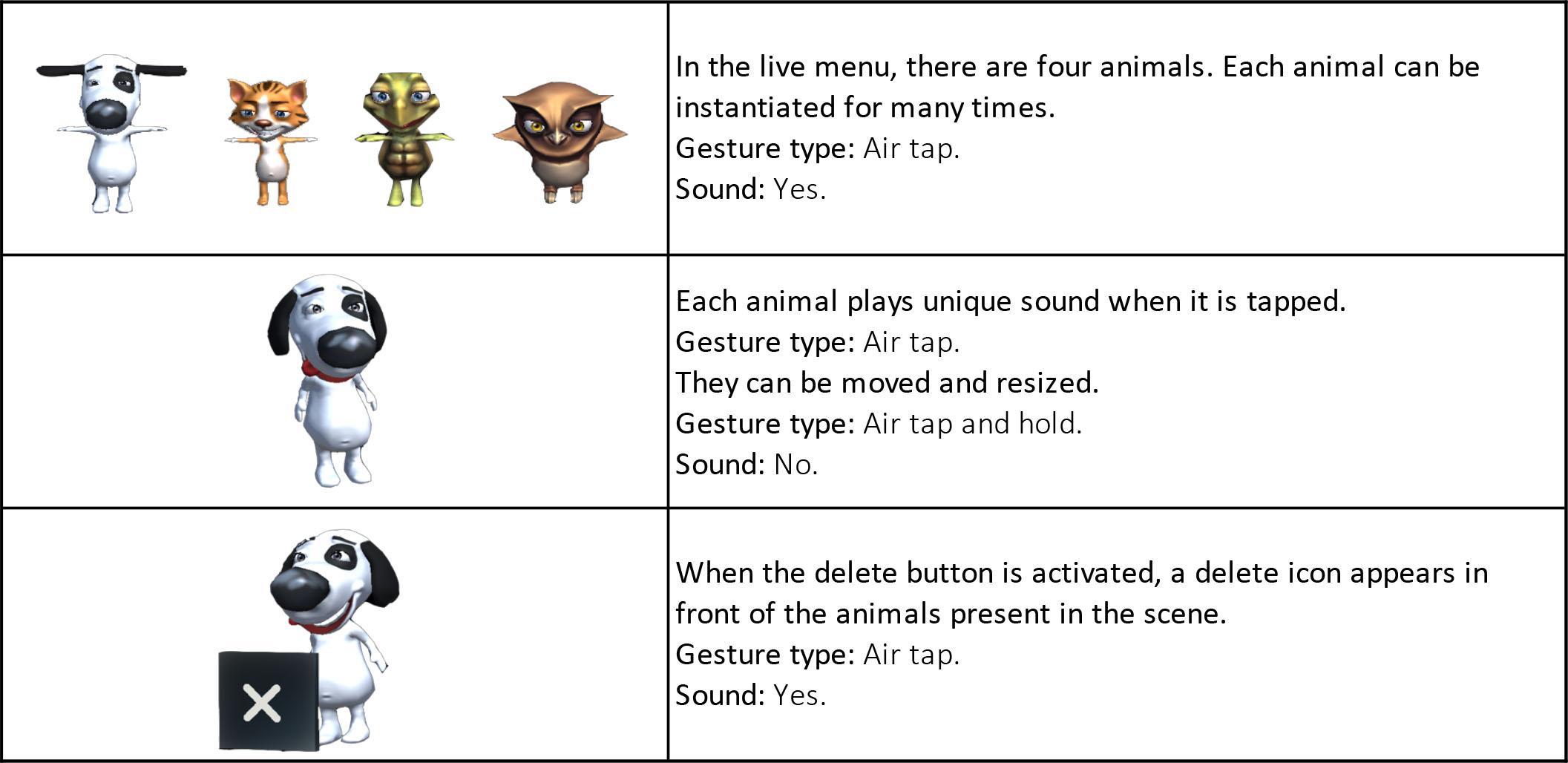

In My Ho Me, three hand gestures supported by HoloLens are used: bloom, air tap, and tap and hold (Figure 9).

Figure 9: Hand gestures.

Feedback mechanisms

Sound:

Each button gives sound feedback when it is activated. Every animal has a unique sound effect to make the user feel that it is alive. Each sub-menu item of the laugh menu also plays a unique sound effect to convey the ambiance. Moreover, when an object is instantiated in the scene, it comes with a sound to get the attention of the user.

Cursor:

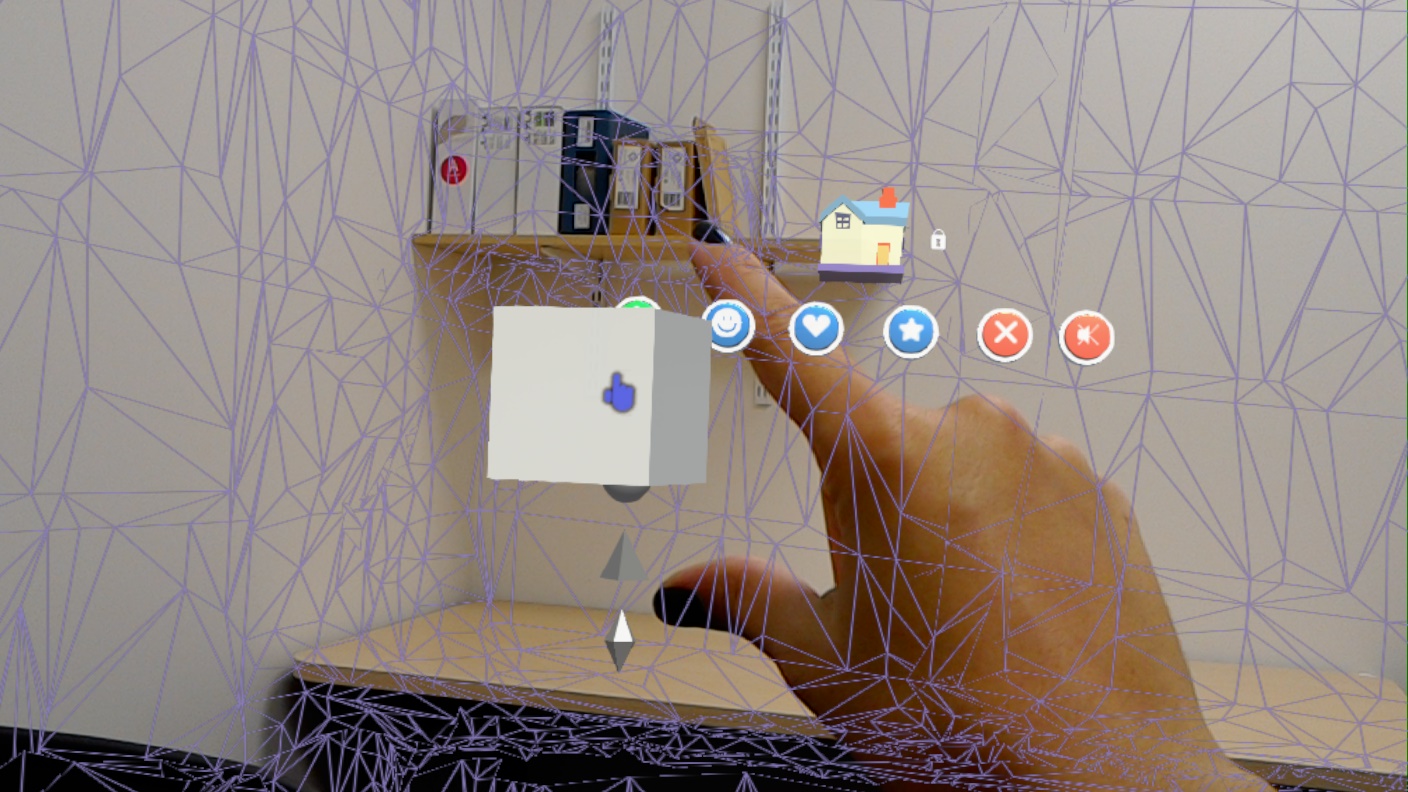

The cursor changes shape from a circle to a "hand ready" icon when it detects the user's finger (Figure 10).

Figure 10: Cursor feedback with changing icons.

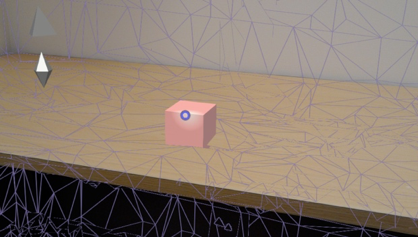

A light appears around the cursor when it detects a virtual surface (Figure 11).

Figure 11: Cursor feedback with light.
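The two cursor cues described above can be summarized as a small decision function. This is a sketch in Python under stated assumptions, not the cursor logic of the application. The names `CursorAppearance` and `cursor_feedback` and the icon labels are hypothetical, and the two cues are assumed to be independent of each other.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CursorAppearance:
    icon: str    # "circle" or "hand_ready" (hypothetical labels)
    light: bool  # whether a light is shown around the cursor


def cursor_feedback(finger_detected: bool, surface_detected: bool) -> CursorAppearance:
    """Map the two detection states to what the cursor shows."""
    icon = "hand_ready" if finger_detected else "circle"
    return CursorAppearance(icon=icon, light=surface_detected)
```

With no finger and no surface detected, the function returns a plain circle without light. Detecting the finger changes only the icon, and detecting a virtual surface changes only the light.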

Animations:

Each instantiated animal comes with an animation. While the animals shown in the sub-menu are still, the instantiated animals move all the time.

Movement:

When the toggle-buttons are tapped, they move to perform the pressed-button action. All the objects that are instantiated in the scene also move when they are tapped. In this way, the user can distinguish the objects that function as buttons from the objects that are present and interactable in the scene.

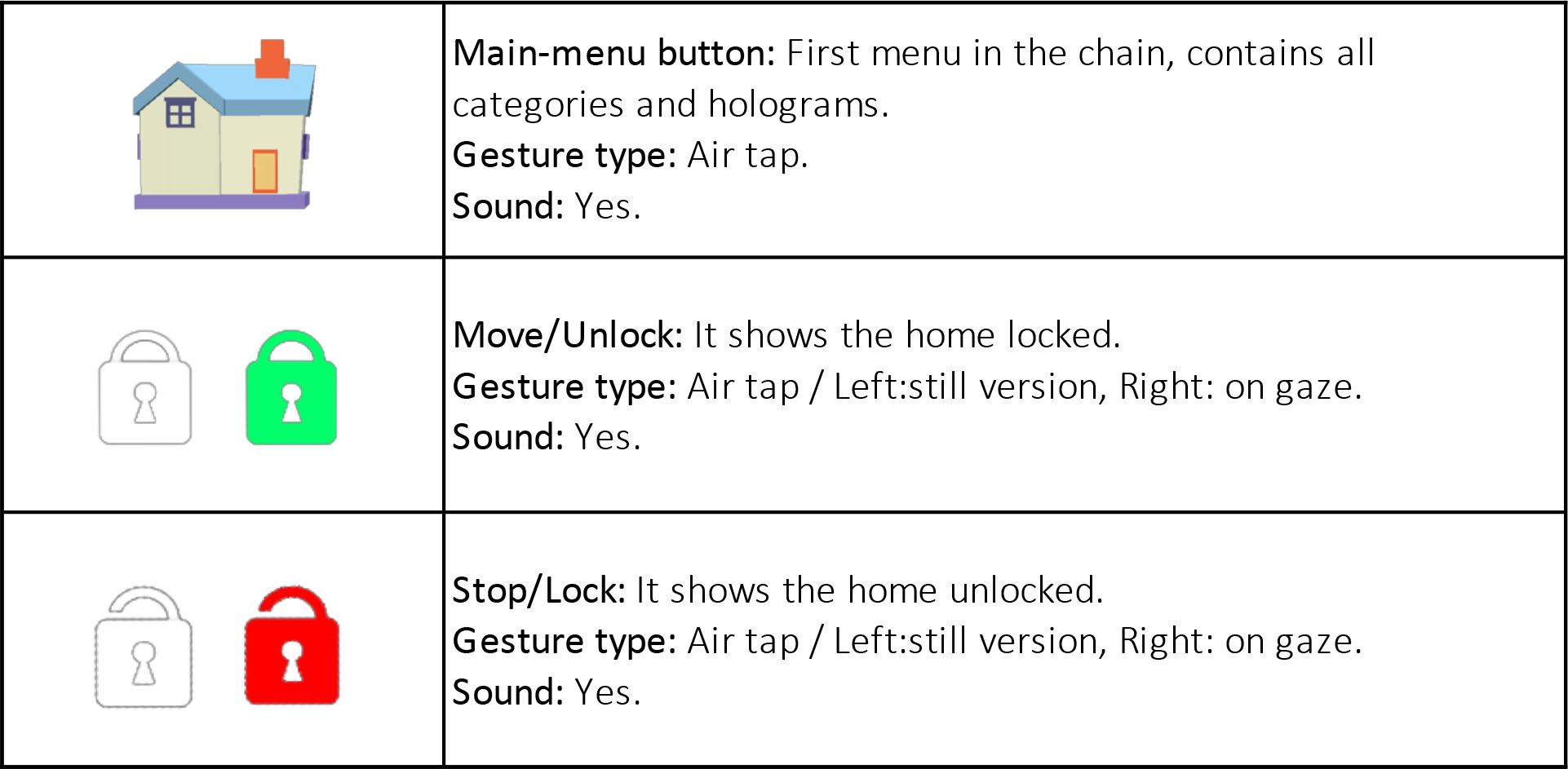

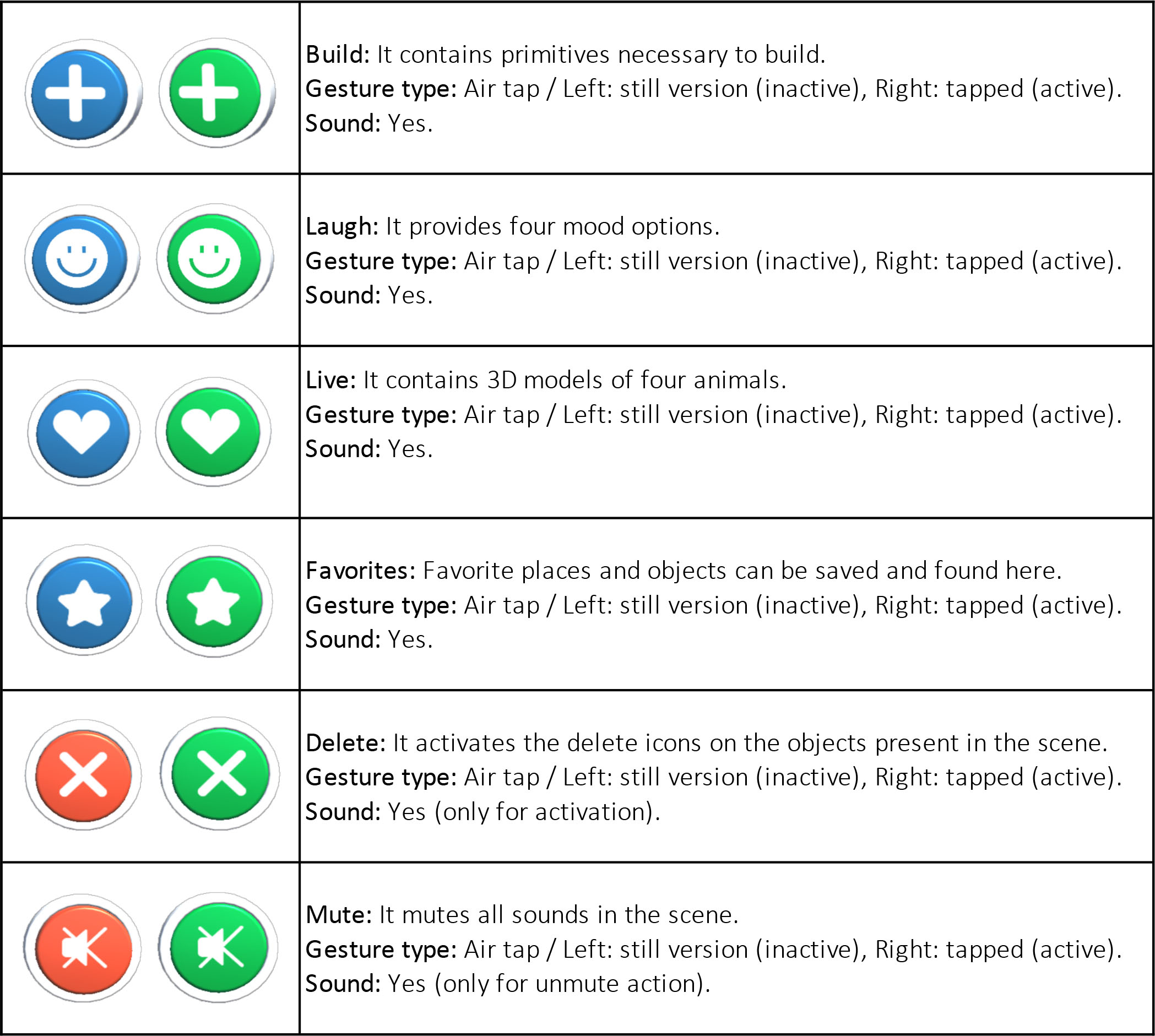

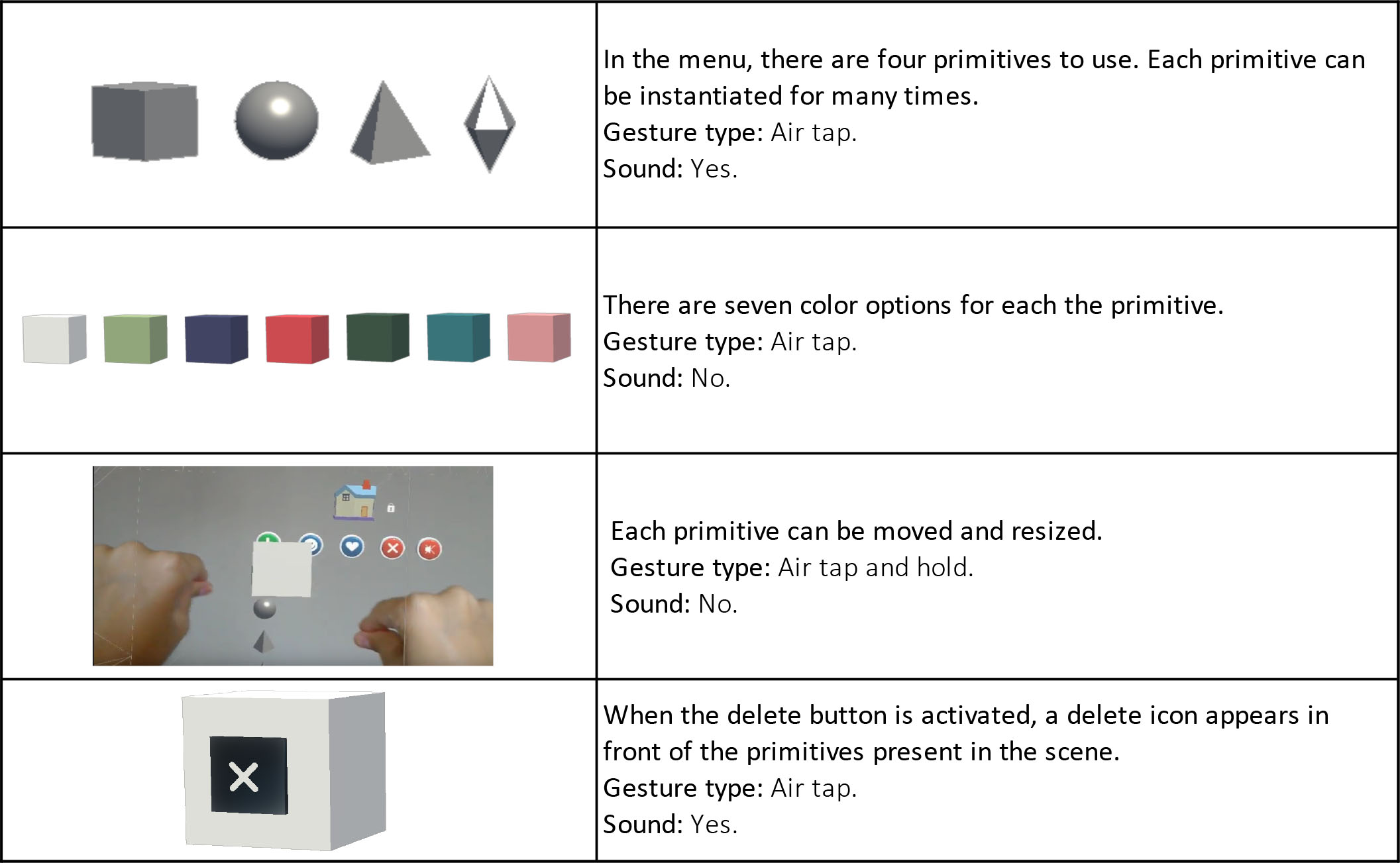

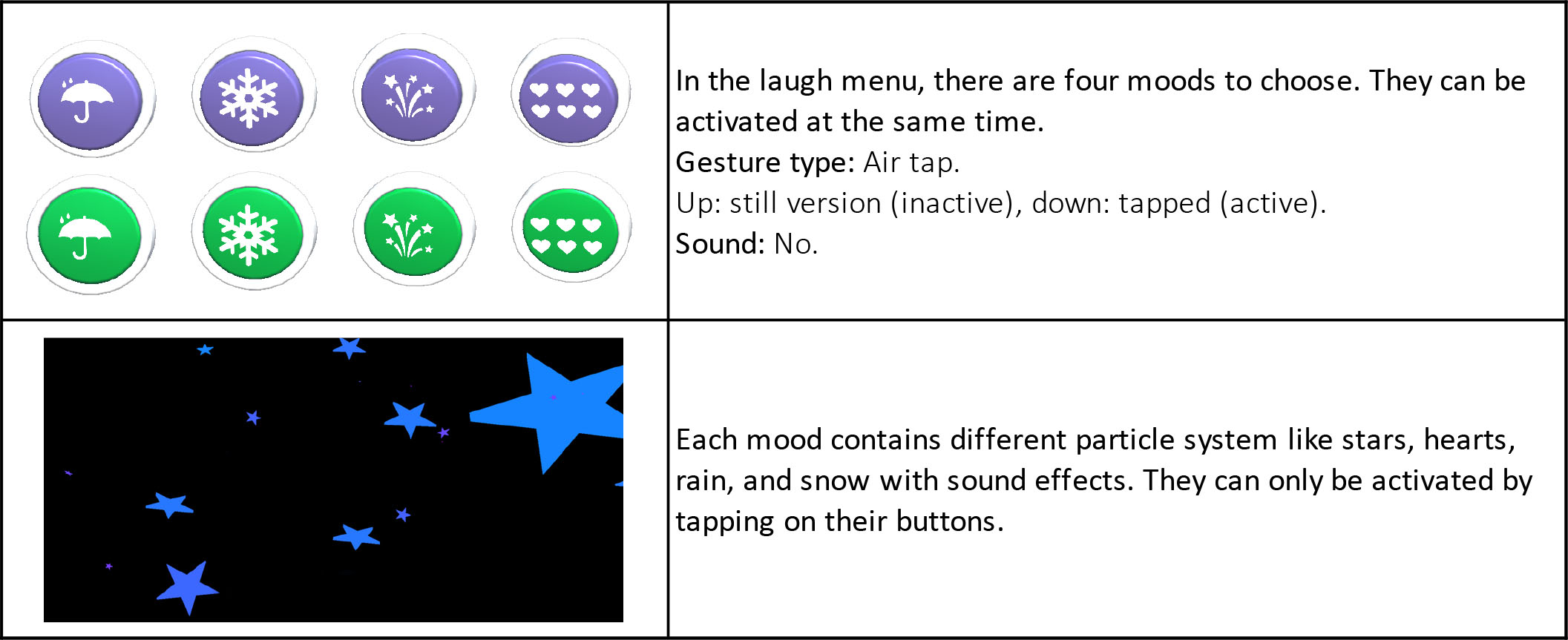

Button function list

Button functions can be studied in six parts. The first part is the landing scene of the interface (Table 1). The second part is the main-menu with its six toggle-buttons (Table 2). The remaining parts explain the sub-menu functions in detail (Table 3, Table 4, Table 5, Table 6).

Table 1: Landing scene button functions.

Table 2: Main-menu button actions.

Table 3: Build-menu button actions.

Table 4: Laugh-menu button actions.

Table 5: Live-menu button actions.

Table 6: Favorites menu button actions.

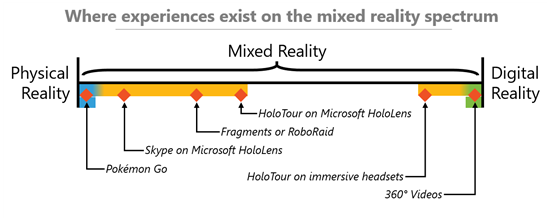

My Ho Me is developed for the HoloLens holographic device, which has AR capabilities and more. Hence, experiences on HoloLens can be more immersive than those on typical AR devices such as mobile phones. However, as of 2018, not all HoloLens apps use the full feature set of the device, so they may be experienced more like AR (Figure 12).

Figure 12: Mixed Reality Spectrum with HoloLens applications (URL-21).

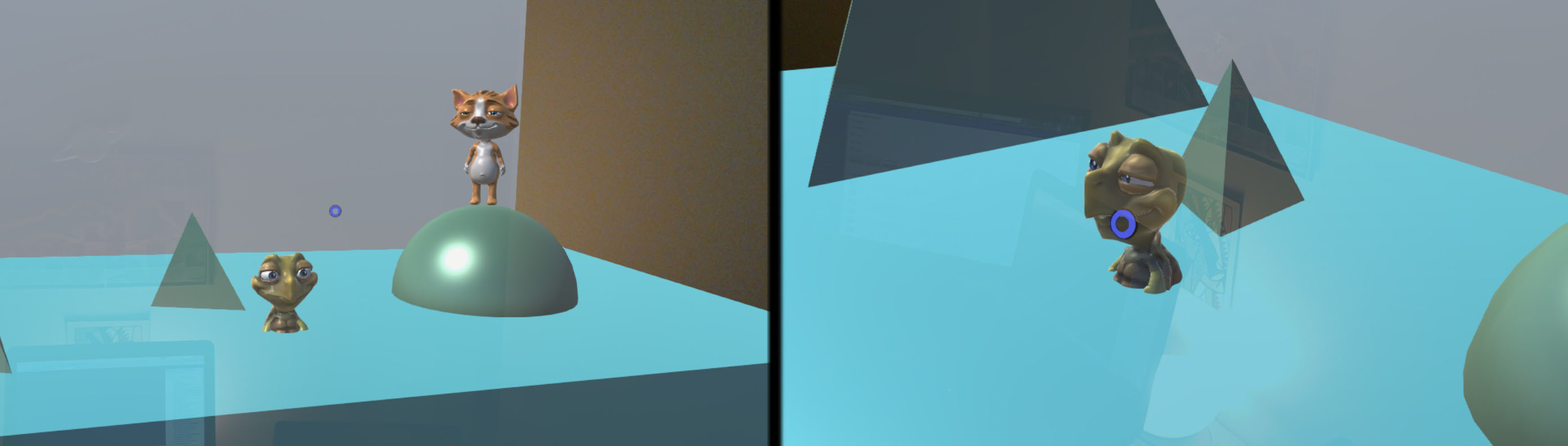

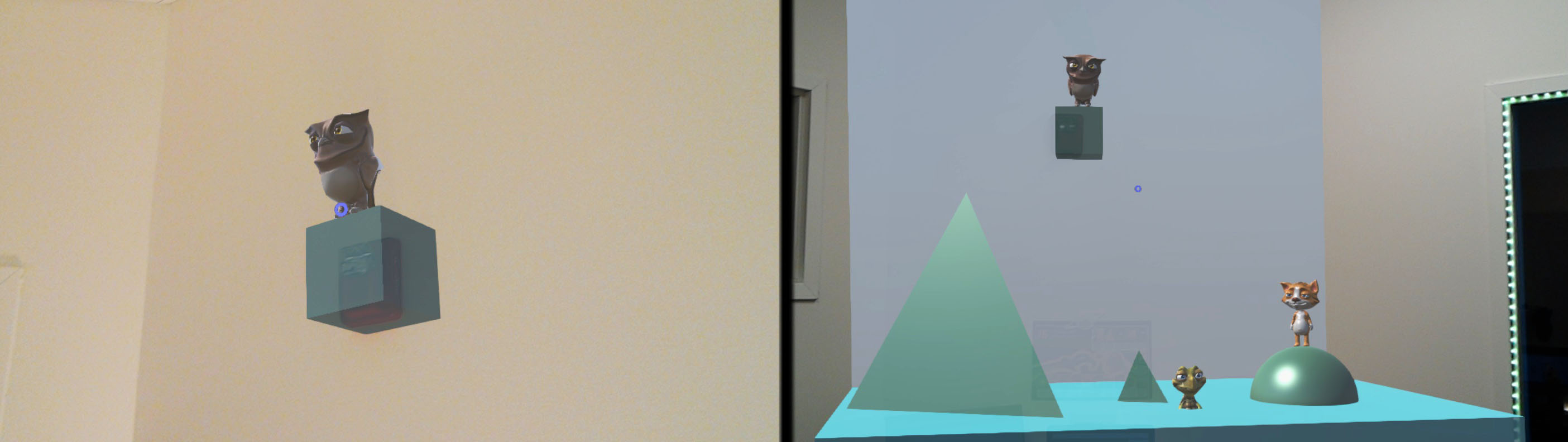

The spatial interface proposed in this study is defined as an MR interface, and it is assumed to be located between the Fragments and RoboRaid and HoloTour applications on the mixed reality spectrum (Figure 13). The figures below present the MR characteristics of the My Ho Me interface to show both its AR and AV capabilities.

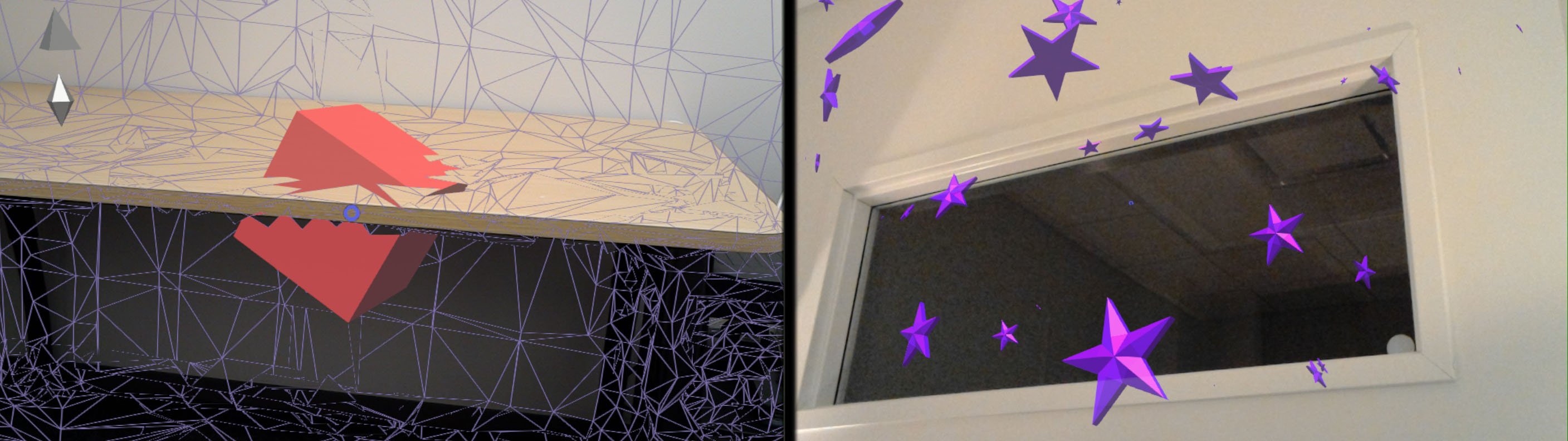

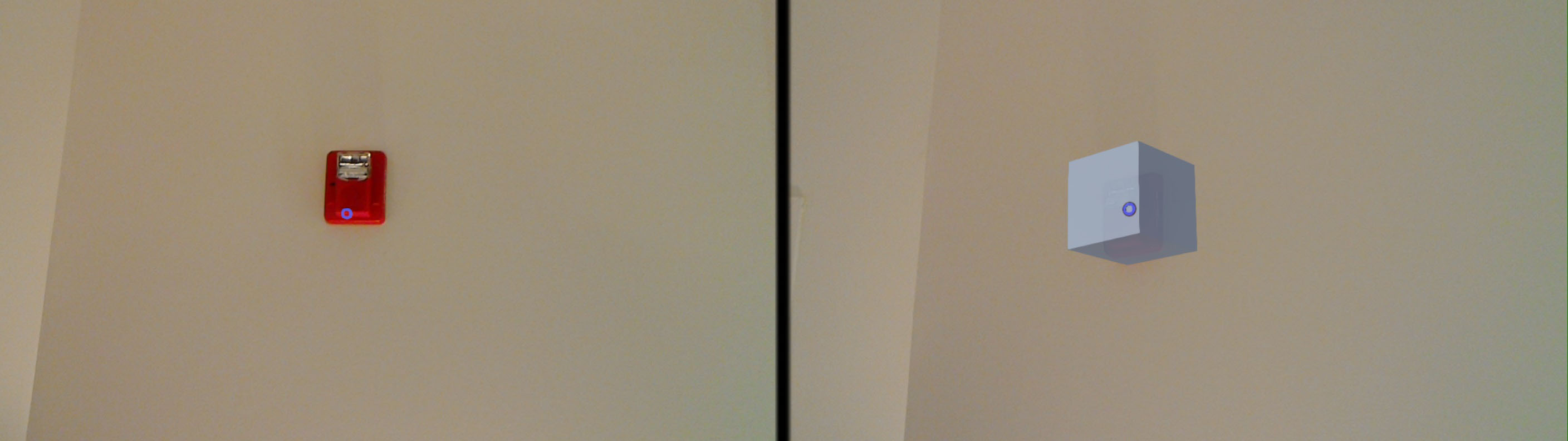

The holograms in the interface can recognize the space and interact with it (Figure 14). In Figure 14, while the red cube can be experienced in the real world, the physical table is also present in the virtual world of the red cube. Therefore, the cube collides with the table. Also in Figure 14, the star particles can detect the elements in the physical room, so they bounce when they hit the real wall, floor, or table. That means the virtual elements and the physical elements share a common space. In Figure 15, the window and the fire alarm in the room are blocked by opaque holograms. If the user keeps adding holograms to the real world, the physical world turns into a hybrid space full of virtual elements (Figure 16, Figure 17). To sum up, the My Ho Me interface can be used both as an AR app, just to place some virtual content in the physical space, and as an AV app, to turn the physical place into a mixed place full of virtual content. This virtuality can affect the user's perception of the real space.

Figure 13: Left: Red cube collides with the table. Right: Particles collide with the space.

Figure 14: The fire alarm is blocked by a cube.

Figure 15: More holograms are added to the physical space.
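The bouncing of the star particles off real surfaces, described above, can be illustrated with a standard reflection of the velocity about the surface normal. The following Python sketch is an assumption about how such a bounce can be computed, not the physics used in My Ho Me. The real floor is simplified to a horizontal plane at a known height, whereas on the device the surfaces would come from the scanned room. The function names are hypothetical.

```python
Vector = tuple[float, float, float]


def reflect(velocity: Vector, normal: Vector) -> Vector:
    """Reflect a velocity about a unit surface normal: v' = v - 2 (v . n) n."""
    dot = sum(v * n for v, n in zip(velocity, normal))
    return tuple(v - 2.0 * dot * n for v, n in zip(velocity, normal))


def step_particle(position: Vector, velocity: Vector, dt: float,
                  floor_height: float = 0.0) -> tuple[Vector, Vector]:
    """Advance a particle by one time step and bounce it off a horizontal floor."""
    x, y, z = (p + v * dt for p, v in zip(position, velocity))
    if y < floor_height and velocity[1] < 0.0:
        # The particle crossed the floor: flip the vertical velocity
        # and mirror the position back above the floor plane.
        velocity = reflect(velocity, (0.0, 1.0, 0.0))
        y = floor_height + (floor_height - y)
    return (x, y, z), velocity
```

A particle falling at 1 m/s from 0.1 m above the floor would, after a 0.2 s step, end up 0.1 m above the floor again and moving upward. The same reflection applies to walls and table tops if their normals are used instead of the floor normal.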