Results

The aim of the study was to provide an open-source spatial interface toolkit for MR applications intended for autistic people with sensory difficulties. During the study, a spatial interface was designed, and a prototype was developed and evaluated. The interface is based on the Home Base intervention method. In this method, when an autistic person with SPD feels that they are about to have a meltdown because of disturbing elements in their surroundings, they retreat to a designated area in a place they use every day, such as school or work, to relax. The interface aims to provide the same calming effect as Home Base, not only in specific places but anywhere the user can access their HoloLens. After several iterations, the prototype was evaluated; the results are explained below.

The expert-based evaluations were conducted using cognitive walkthrough and heuristic evaluation, which revealed issues that users may encounter while interacting with the interface. In addition, some design details that need to be analyzed and developed further were identified. The analysis points to the following features and functions to be discussed for the next version of the prototype:

• Copy-paste function

• Location indicator for the home-button

• Use of text to support the visuals

• Color scheme change

• Automated error correction

• Instructions on how to use

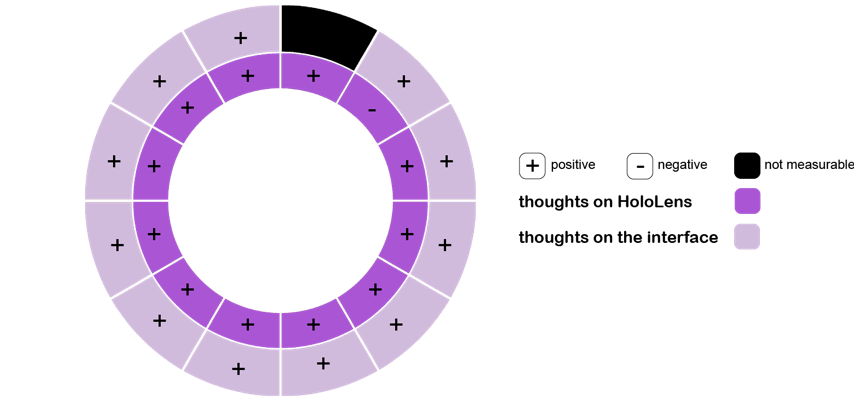

In the pilot study, the collected data show that most of the autistic children (average age 10) were unfamiliar with the HoloLens device and mixed reality technologies. Eleven of the twelve participants wanted to try the HoloLens, and eleven participants said that they liked the interface, as shown in Figure 29. One participant did not share his thoughts on the interface. Only one participant hesitated to try the device; the other eleven were excited about it and wanted to try it immediately.

Figure 29: The thoughts of the participants on HoloLens and the interface.

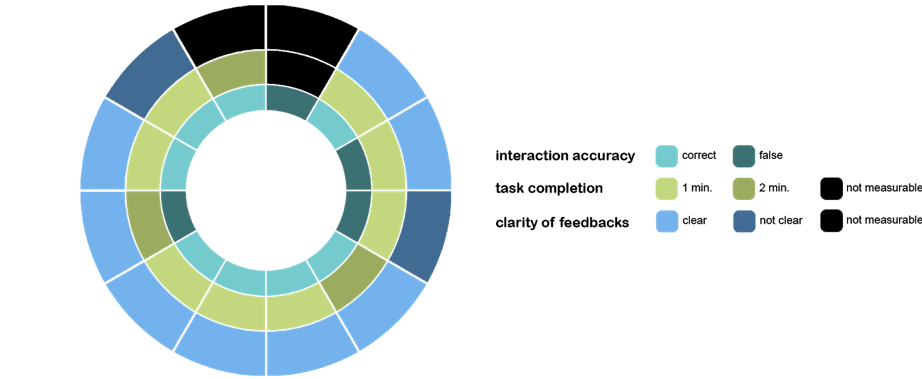

The interaction accuracy, task completion time, and perception of the feedback were analysed and plotted, as shown in Figure 30. Based on the screen recordings and our observations during the experiments, we determined that eight of the twelve participants were able to interact with the interface using the hand gesture. Two of the remaining four were willing to interact but could not aim the cursor. The other two did not try to use hand gestures and only watched the virtual objects, which stayed still in the scene.

The average time to complete the task was 1 minute 27 seconds. Eight of the twelve participants completed the test in one minute, and two of the remaining four completed it in two minutes. Only one participant could not complete the test, because of anxiety.

The analysis of the clarity of the feedback shows that eight of the twelve participants understood it. Judging by their interaction styles, four of these participants found sound feedback more effective and understandable, while the other four responded better when they recognized the button actions. Of the remaining four participants, one did not understand the feedback because he preferred to watch the virtual objects, and three could not use the hand gestures, so the effect of the feedback could not be measured for them.

As a limitation of the study, the participants' learning curve for AR and MR devices could not be measured under these circumstances, because only one HoloLens was available and the device was not yet affordable as of 2018.
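For readers who prefer proportions to raw counts, the interaction outcomes reported above can be converted to shares of the twelve participants. The following is a minimal illustrative sketch, not part of the study's analysis; the dictionary labels and the helper `shares` are hypothetical names introduced here, and only the counts come from the text.

```python
TOTAL = 12  # participants in the pilot study, as reported in the text

# Interaction outcomes as reported in the text (labels are illustrative).
interaction = {
    "interacted with hand gesture": 8,
    "tried but could not aim the cursor": 2,
    "did not try, only watched": 2,
}


def shares(counts, total):
    """Return each count as a percentage of total, rounded to one decimal."""
    return {label: round(100 * n / total, 1) for label, n in counts.items()}


interaction_shares = shares(interaction, TOTAL)
for label, pct in interaction_shares.items():
    print(f"{label}: {pct}%")
```

With a sample of twelve, each participant accounts for roughly 8 percentage points, so these shares should be read as descriptive only.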